Content transcoding hits mobiles

October 2007

Content adaptation and transcoding is high on the agenda of many small mobile content or services companies at the moment and is causing more bad language and angst than anything else I can remember in the industry in recent times. Before I delve into that issue, what is content adaptation?

Content translation and the need for it on the Internet is as old as the invention of the browser and is caused by standards, or I should say the interpretation of them. Although HTML, the language of the web page, transformed the nature of the Internet by enabling anyone to publish and access information through the World Wide Web, there were many areas of the specification that left a sufficient degree of fogginess for browser developers to 'fill in' with their interpretation of how content should be displayed.

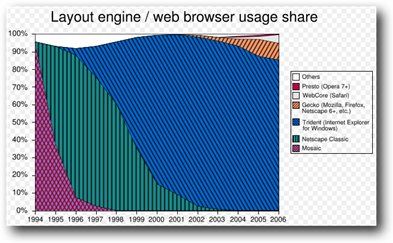

In the early days, most of us engaged with the WWW through the Netscape Navigator browser. Indeed, Netscape epitomised all the early enthusiasm for the Internet, and its IPO on August 9, 1995 set in play the fabulously exciting 'bubble' of the late 1990s. In the years after its IPO, the Netscape browser held over 90% market share.

This inherent market monopoly made it very easy for early web page developers to develop content, as it only needed to run on one browser. However, that did not make life particularly easy, because the Netscape Navigator browser had so many problems in how it arbitrarily interpreted HTML standards. In practice, a browser is only an interpreter after all and, like a human interpreter, is prone to misinterpretation when there are gaps in the standards.

Browser market shares. Source Wikipedia

Content Adaptation

Sometimes the drafted HTML displayed fine in Navigator, but at other times it didn't. This led to whole swathes of workarounds that made the task of developing interesting content a rather hit-and-miss affair. A good example of this is the HTML standard that says the TABLE tag should support a CELLSPACING attribute to define the space between parts of the table. But the standard doesn't define a default value for that attribute, so unless you explicitly define CELLSPACING when building your page, two browsers may use different amounts of white space in your table.
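As a minimal sketch of that fix (set the attribute explicitly rather than trust a browser default), the hypothetical JavaScript helper below adds a CELLSPACING attribute to any TABLE tag that lacks one. It relies on a simple regular expression rather than a real HTML parser, so treat it as an illustration only.

```javascript
// Hypothetical helper: give every <table> an explicit CELLSPACING so that
// browsers cannot fall back on their own, differing, defaults.
// Regex-based sketch only: it is not a full HTML parser and will misbehave
// if an attribute value inside the tag contains a ">" character.
function addDefaultCellspacing(html, spacing) {
  return html.replace(/<(table)\b([^>]*)>/gi, (match, tagName, attrs) => {
    // Leave tables that already declare CELLSPACING untouched.
    if (/\bcellspacing\s*=/i.test(attrs)) {
      return match;
    }
    // Keep the original tag-name case and attributes, then append ours.
    return `<${tagName}${attrs} cellspacing="${spacing}">`;
  });
}

const page = '<TABLE border="1"><tr><td>A</td><td>B</td></tr></TABLE>';
console.log(addDefaultCellspacing(page, 2));
// <TABLE border="1" cellspacing="2"><tr><td>A</td><td>B</td></tr></TABLE>
```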

(The CELLSPACING example is credited to NetMechanic.) This type of problem was further complicated by the adoption of browser-specific extensions. The original HTML specifications were rather basic, and it was quite easy to envision and implement extensions that enabled better presentation of content. Netscape did this with abandon and even invented a web page scripting language that is universal today: JavaScript (which has nothing to do with Sun's Java language).

Early JavaScript was riddled with problems and, from my limited experience of writing in the language, most of the time was spent trying to understand why code that looked correct according to the rule book failed to work in practice!

Around this time I remember attending a Microsoft presentation in Reston where Bill Gates spent an hour talking about why Microsoft were not in favour of the Internet and why they were not going to create a browser themselves. Oh, how times change: within a year BG announced that the whole company was going to focus on the Internet and that its browser would be given away free to "kill Netscape".

In fact, I personally lauded Internet Explorer when it hit the market because, in my opinion, it actually worked very well. It was faster than Navigator but, more importantly, when you wrote the HTML or JavaScript, the code worked as you expected it to. This made life so much easier. The problem was that you now had to write pages that would run on both browsers, or you risked alienating a significant sector of your users. Then, as now, there were many users who flatly refused to switch from Navigator to IE because of their emotional dislike of Microsoft.

From that point on, it was downhill for a decade, as you had to include browser detection on your web site so that appropriately coded browser-specific (and, even worse, version-specific) content could be sent to users. Without this, it was just not possible to guarantee that users would be able to see your content. Below is typical of the code you had to use:

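```javascript
// Typical late-1990s browser sniffing: branch on the name the browser reports
var browserName = navigator.appName;

if (browserName == "Netscape") {
  alert("Hi Netscape User!");
} else if (browserName == "Microsoft Internet Explorer") {
  alert("Hi, Explorer User!");
}
```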

If we now fast-forward to 2007, the world of browsers has changed tremendously, but the problem has not gone away. Although it is less common to detect browser types and send browser-specific code, considerable problems still exist in making content display in the same way on all browsers. I can say from practical experience that making an HTML page with extensive style sheets display correctly on Firefox, IE 6 and IE 7 is far from easy and definitely frustrating!

The need to adapt content to a particular browser was the first example of what is now called content adaptation. Another technology in this space is called content transcoding.

Content transcoding

I first came across true content transcoding when I was working on the first real implementation of a Video on Demand service at Hong Kong Telecom in the mid-1990s. This was based on proprietary technology, and a colleague and I were of the opinion that it should be based on IP technologies to be future-proof. Although we lost that battle, we did manage to get Mercury in the UK to base its VoD developments on IP. Mercury went on to sell its consumer assets to NTL, so I'm pleased that the two of us managed to get IP adopted as the basis of broadband video services in the UK at the time.

Around this time, Netscape were keen to move Navigator into the consumer market, but it was too bloated to run on a set-top box, so Netscape created a new division called Navio, which developed a cut-down browser for the set-top box consumer market. Its main aim, however, was to create a range of non-PC Internet access platforms.

This was all part of the anti-PC / Microsoft community that then existed (exists?) in Silicon Valley. Navio morphed into Network Computer Inc. (NCI), owned by Oracle, and went on to build another icon of the time: the network computer. NCI changed its name to Liberate when it IPOed in 1999. Sadly, Liberate went into receivership in the early 2000s, but it lives on today in the form of SeaChange, which bought its assets.

Anyway, sorry for the sidetrack, but it was through Navio that I first came across the need to transcode content, as a normal web page just looked awful on a TV set. TV Navigator also transcoded HTML seamlessly into MPEG. The main problems in presenting a web page on a TV were:

Fonts: Text that could be read easily on a PC could often not be read on a TV because the font size was too small or the font was too complex. So, fonts were increased in size and simplified.

Images: Another issue was that the small amount of memory on an STB meant that the browser needed to be cut down in size to run. One way of achieving this was to cut down the number of content types that could be supported. For example, instead of being able to display all picture formats (BMP, GIF, JPG etc.), the browser would only render JPG pictures. This meant that pictures taken off the web needed to be converted to JPG at the server or head-end before being sent to the STB.

Rendering and resizing: Liberate automatically resized content to fit on the television screen.

Correcting content: For example, horizontal scrolling is not considered a 'TV-like' property, so content was scaled to fit the horizontal screen dimensions. If more space is needed, vertical scrolling is enabled to allow the viewer to navigate the page. The transcoder would also automatically wrap text that extends outside a given frame's area. In the case of tables, the transcoder would ignore widths specified in HTML if the cell or the table is too wide to fit within the screen dimensions.
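The rules above can be sketched in JavaScript as a transformation over a simplified page model. This is not how Liberate's TV Navigator worked internally: the element objects, the `transcodeForTv` function, the 560-pixel screen width and the 18-pixel minimum font size are all assumptions chosen for illustration, and text wrapping is left out for brevity.

```javascript
// Hypothetical sketch of the TV transcoding rules, applied to a simplified
// page model (an array of plain objects) rather than to real HTML.
// All numbers below are illustrative assumptions, not STB specifications.
const SCREEN_WIDTH = 560; // assumed usable TV width, px
const MIN_FONT_PX = 18; // assumed smallest legible TV font, px
const SUPPORTED_IMAGE_FORMATS = new Set(["jpg"]); // the STB browser renders JPG only

function transcodeForTv(elements) {
  return elements.map((el) => {
    const out = { ...el }; // never mutate the original page model
    switch (out.type) {
      case "text":
        // Fonts: enlarge small text and replace complex faces with a plain one.
        out.fontPx = Math.max(out.fontPx, MIN_FONT_PX);
        out.fontFamily = "sans-serif";
        break;
      case "image":
        // Images: mark non-JPG pictures for conversion at the server/head-end.
        if (!SUPPORTED_IMAGE_FORMATS.has(out.format)) {
          out.convertTo = "jpg";
        }
        // Resizing: scale wide pictures down to the screen, keeping aspect ratio.
        if (out.width > SCREEN_WIDTH) {
          out.height = Math.round((out.height * SCREEN_WIDTH) / out.width);
          out.width = SCREEN_WIDTH;
        }
        break;
      case "table":
        // Tables: ignore an authored width that would force horizontal scrolling.
        if (out.width > SCREEN_WIDTH) {
          out.width = SCREEN_WIDTH;
        }
        break;
    }
    // Height is never capped: anything taller than the screen scrolls vertically.
    return out;
  });
}

const page = [
  { type: "text", fontPx: 12, fontFamily: "Garamond" },
  { type: "image", format: "gif", width: 800, height: 400 },
  { type: "table", width: 900 },
];
console.log(transcodeForTv(page));
```

Capping widths while leaving heights alone is what turns horizontal scrolling into vertical scrolling, in line with the 'TV-like' behaviour described above.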

In practice, most VoD or IPTV services only offered closed walled-garden services at the time, so most of the content was specifically developed for an operator's VoD service.

WAP and the 'Mobile Internet' comes along

Content adaptation and transcoding trundled along quite happily in the background as a requirement for displaying content on non-PC platforms for many years until 2007 and the belated advent of open internet access on mobile or cell phones.

In the late 1990s the world was agog with the Internet, which was accessed using personal computers via LANs or dial-up modems. There was clearly an opportunity to bring the 'Internet' to the mobile or cell phone. I have put quotation marks around the Internet because the mobile industry has never seen the Internet in the same light as PC users.

The WAP initiative was aimed at achieving this goal, and it can at least be credited with a concept that lives on to this day: the Mobile Internet (WAP, GPRS, HSDPA on the move!). Data facilities on mobile phones were really quite crude at the time. Displays were monochrome with a very limited resolution. Moreover, the data rates achievable over the air were very low, so WAP content standards had to take this into account.

WAP's markup language, WML, was in essence a simplified HTML, and if a content provider wanted to create a service that could be accessed from a mobile phone, they needed to write it in WML. Services were very simple, as shown in the picture above, and could quite easily be navigated using a thumb.

The main point was that it was quite natural for developers to create a web site specifically for use on a mobile phone. Content adaptation took place in the authoring itself, and there was no need for automated transcoding of content. If you accessed a WAP site, it may have been a little slow because of the reliance on GPRS, but services were quite easy and intuitive to use. WAP markup was extremely basic, so it was updated to XHTML (XHTML Mobile Profile, in WAP 2.0), which provided improved look-and-feel features that could be displayed on the quickly improving mobile phones.

In 2007 we are beginning to see phones with full-capability browsers backed up by broadband 3G bearers, making Internet access on phones a reality. Now you may think this is just great, but in practice phones are not PCs by a long chalk. Specifically, we are back to browsers interpreting pages differently and, more importantly, mobile phone screens are too small to display standard web pages in a way that lets a user navigate them with ease (though things are changing quite rapidly with Apple's iPhone technology).

Today, as in the early days of WAP, most companies who seriously offer mobile phone content will create a site specifically developed for mobile phone users. Often these sites will have URLs such as m.xxxx.com or xxxx.mobi so that a user can tell that the site is intended for use on a mobile phone.

Although there was a lot of frustration about phones' capabilities, everything at the mobile phone party was generally OK.

Mobile phone operators have been criticised for as long as anyone can remember for their lack of understanding of the Internet and for focusing on closed walled-garden services, but that seems to be changing at long last. They have recognised that their phones are now capable of being a reasonable platform for accessing the WWW. They have also opened their eyes and realised that there is real revenue to be derived from allowing their users to access the web, albeit in a controlled manner.

When they opened their browsers to the WWW, they realised that this was not without its challenges. In particular, very few web sites have versions that can be browsed on a mobile phone. Even more challenging, the mobile phone content industry can be called embryonic at best, with few well-known service providers. Customers naturally wanted to use the web services and visit the web sites that they use on their PCs. Of course, most of these look dreadful on a mobile phone and cannot be used in practice. Although many of the bigger companies, Google and MySpace to name but two, are now beginning to adapt their sites to the mobile, 99.9999% (as many 9s as you wish) of sites are designed for a PC only.

This has led mobile phone operators to turn to content transcoding to keep their users on their data services and hence keep their revenues growing. The transcoder is placed in the network and intercepts users' traffic. If a web page needs to be modified so that it will display 'correctly' on a particular mobile phone, the transcoder automatically changes the page's content to a layout that it thinks will display correctly. Two of the largest transcoding companies in this space are Openwave and Novarra.
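A transcoder has to decide, for each intercepted response, whether the page 'needs' modifying. The function below is a hypothetical sketch of one such rule: leave alone anything served with a WAP markup content type (WML or XHTML Mobile Profile) or carrying an XHTML Mobile Profile doctype, and transcode any other HTML page. Commercial products such as those from Openwave and Novarra use their own, far more elaborate heuristics, which are not shown here.

```javascript
// Hypothetical rule for an in-network transcoder: should this response be rewritten?
// The content types are the standard WAP markup types; the rules are assumptions.
const MOBILE_CONTENT_TYPES = new Set([
  "text/vnd.wap.wml", // WML (WAP 1.x)
  "application/vnd.wap.xhtml+xml", // XHTML Mobile Profile (WAP 2.0)
]);

function needsTranscoding(contentType, body) {
  // Drop parameters such as "; charset=utf-8" before comparing.
  const mime = contentType.split(";")[0].trim().toLowerCase();
  if (MOBILE_CONTENT_TYPES.has(mime)) {
    return false; // already authored for phones
  }
  if (mime !== "text/html") {
    return false; // images, downloads etc. are outside this sketch
  }
  // An XHTML Mobile Profile doctype served as text/html also marks a mobile page.
  return !/XHTML Mobile/i.test(body);
}

console.log(needsTranscoding("text/html; charset=utf-8", "<html><body>PC page</body></html>")); // true
console.log(needsTranscoding("application/vnd.wap.xhtml+xml", "<html/>")); // false
```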

This issue came to the fore recently (September 2007) in a post by Luca Passani, written after he learned that Vodafone had implemented content transcoding by intercepting and modifying the User Agent dialogue that takes place between mobile phone browsers and web sites. From Luca's page, this dialogue is along the lines of:

- I am a Nokia 6288,
- I can run Java apps MIDP2-CLDC 1,
- I support MP3 ringtones
- ...and so on
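In HTTP terms, this 'dialogue' is carried in request headers, most visibly the User-Agent header. As an illustration, the sketch below pulls the handset model and its Java ME profile and configuration out of a User-Agent string. The example string is made up in the style of Nokia handsets rather than captured from a real 6288, and `parseUserAgent` is a hypothetical name. Details such as MP3 ringtone support are not normally spelled out in the User-Agent string and have to be looked up elsewhere.

```javascript
// Illustrative only: extract the handset model and Java ME (MIDP/CLDC) versions
// from a User-Agent header in the "Model/version ... Profile/... Configuration/..." style.
function parseUserAgent(ua) {
  const model = ua.split(/[\/\s]/)[0]; // first token, e.g. "Nokia6288"
  const profileMatch = ua.match(/Profile\/(MIDP-[\d.]+)/);
  const configMatch = ua.match(/Configuration\/(CLDC-[\d.]+)/);
  return {
    model,
    profile: profileMatch ? profileMatch[1] : null, // e.g. "MIDP-2.0"
    config: configMatch ? configMatch[1] : null, // e.g. "CLDC-1.1"
  };
}

// Made-up example string in the style of Nokia handsets, not a captured header.
const ua = "Nokia6288/2.0 (05.94) Profile/MIDP-2.0 Configuration/CLDC-1.1";
console.log(parseUserAgent(ua));
```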

His concern, quite rightly, is that this is a standard dialogue that goes on across the whole of the WWW and enables a web site to adapt and provide appropriate content to the device requesting it. Without it, sites are unable to ensure that their users will get a consistent experience no matter what phone they are using. Incidentally, Luca provides an open-source XML file called WURFL that contains the capability profile of most mobile phones. This is used by content providers, following a user agent dialogue, to ensure that the content they send to a phone will run; it contains the core information needed to enable content adaptation.
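The WURFL workflow described above (identify the phone from its User-Agent, then pick content it can handle) can be sketched as follows. This deliberately does not use the WURFL XML format or any WURFL library: the table, its capability names and its values are invented for illustration, and the longest-prefix match is just one plausible matching strategy.

```javascript
// Hypothetical, hand-written capability table in the spirit of WURFL.
// Not the WURFL format or API; entries and capability names are invented.
const DEVICE_CAPABILITIES = [
  { uaPrefix: "Nokia6288", caps: { screenWidth: 240, midp2: true, mp3Ringtones: true } },
  { uaPrefix: "Nokia", caps: { screenWidth: 128, midp2: false, mp3Ringtones: false } },
];
const GENERIC_DEVICE = { screenWidth: 120, midp2: false, mp3Ringtones: false };

function lookupCapabilities(userAgent) {
  // Pick the most specific (longest) matching prefix, else a generic phone.
  let best = null;
  for (const entry of DEVICE_CAPABILITIES) {
    const matches = userAgent.startsWith(entry.uaPrefix);
    if (matches && (best === null || entry.uaPrefix.length > best.uaPrefix.length)) {
      best = entry;
    }
  }
  return best ? best.caps : GENERIC_DEVICE;
}

function chooseRingtone(userAgent) {
  // Hypothetical file names: send MP3 only to phones known to play it.
  return lookupCapabilities(userAgent).mp3Ringtones ? "ringtone.mp3" : "ringtone.mid";
}

console.log(chooseRingtone("Nokia6288/2.0 (05.94) Profile/MIDP-2.0 Configuration/CLDC-1.1")); // ringtone.mp3
console.log(chooseRingtone("Mozilla/5.0 (Windows; U; Windows NT 5.1)")); // ringtone.mid
```

If a transcoder replaces the handset's User-Agent with a desktop browser string, `lookupCapabilities` silently falls back to the generic profile, which illustrates Luca's concern: the content provider can no longer tell what the phone is able to run.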

It is conjectured that if every mobile operator in the world uses transcoders, and it looks like this is going to be the case, then this will add another layer of confusion to the already considerable challenge of providing content to mobile phones. Not only will content providers have to understand the capabilities of each phone, but they will also need to understand when and how each operator uses transcoding.

Personally, I am against transcoding in this market, and the reasons why can be seen in this excellent posting by Nigel Choi and Luca Passani. In most cases, no automatic transcoding of a standard WWW web page can be better than providing a dedicated page written specifically for a mobile phone. Yes, there is a benefit for mobile operators in that no matter what page a user selects, something will always be displayed. But will that page be usable?

Of course, transcoders should pass through untouched any web site that is tagged by an m.xxxx or xxxx.mobi URL, as that site should be capable of working on any mobile phone, but in these early days of transcoding implementation this is not always happening, it seems.

Moreover, the mobile operators say that this situation can be avoided by 3rd party content providers applying to be on the operators' white list of approved services. If this turns out to be a universal practice, then content providers would need to gain approval and get on the lists of all the mobile operators in the world. Wow! Imagine an equivalent situation on the PC if content providers needed to get approval from all ISPs. Well, you can't, can you?
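The pass-through behaviour argued for above, together with the operators' white list, can be sketched as a single check. The `shouldPassThrough` function and the example hostnames are hypothetical, and the `m.` prefix is only a naming convention, so a real transcoder would have to treat it as a hint rather than a guarantee.

```javascript
// Hypothetical pass-through check for a transcoding proxy.
function shouldPassThrough(url, whiteList) {
  const host = new URL(url).hostname.toLowerCase();
  // Naming conventions that signal a site built for phones (a hint, not a guarantee).
  const looksMobile = host.startsWith("m.") || host.endsWith(".mobi");
  // Operator-approved services are also left untouched.
  return looksMobile || whiteList.has(host);
}

// Placeholder hostnames for illustration only.
const operatorWhiteList = new Set(["approved-partner.example.com"]);

console.log(shouldPassThrough("http://m.example.com/news", operatorWhiteList)); // true
console.log(shouldPassThrough("http://example.mobi/", operatorWhiteList)); // true
console.log(shouldPassThrough("http://www.example.com/", operatorWhiteList)); // false
```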

This move represents another aspect of how the control culture of the mobile phone industry comes to the fore, placing operators' needs before those of 3rd party content providers. This can only damage the 3rd party mobile content and service industry and further hold back the coming of an effective mobile internet. A sad day indeed. Surely it would be better to play a long game and encourage web sites to create mobile versions of their services?